You may be surprised to learn that the list of Spark users includes Netflix, Uber, Pinterest, Conviva, Yahoo, Alibaba, eBay, MyFitnessPal, OpenTable, TripAdvisor, and many more. Market leaders and large organizations already tend to use Spark for their solutions.

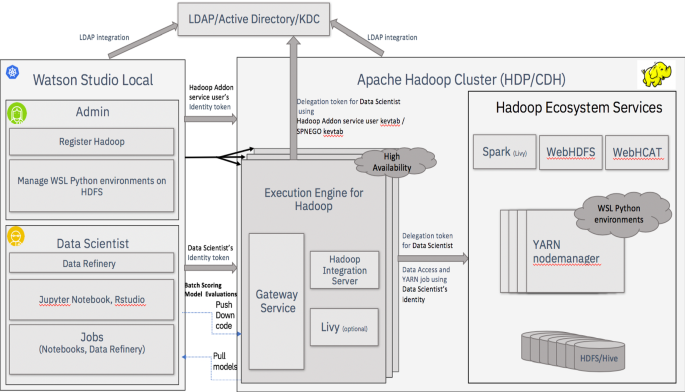

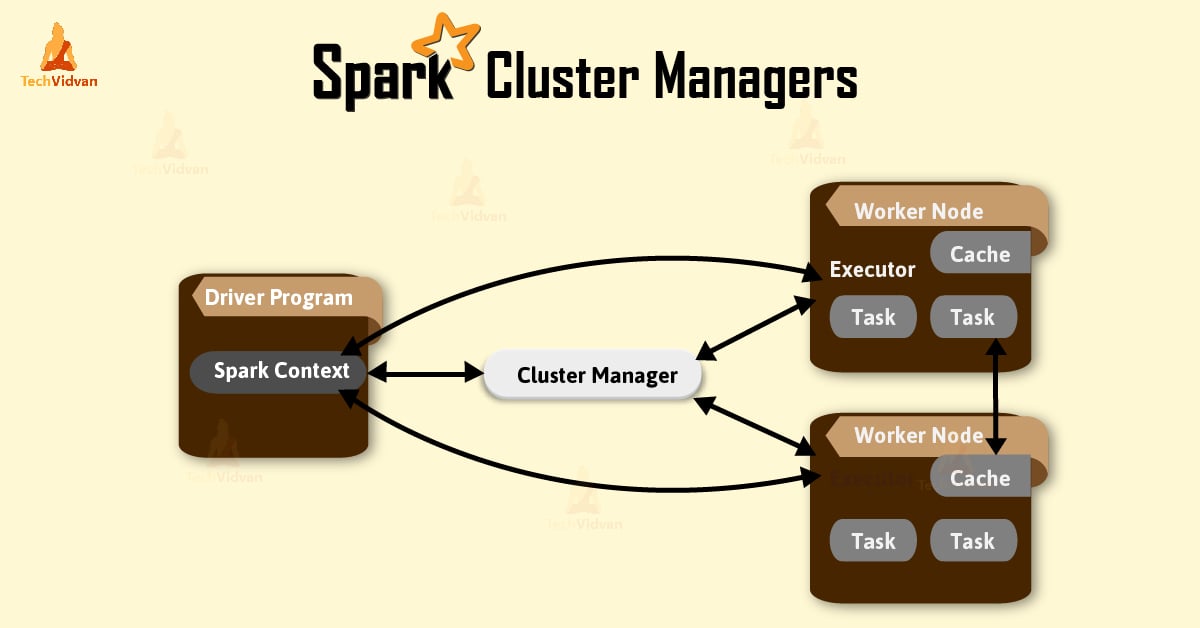

What Apache Spark is About

Apache Spark is a lightning-fast cluster computing technology designed for fast computation. It is based on the Hadoop MapReduce model and extends that model to efficiently support more types of computation, including interactive queries and stream processing. The main feature of Spark is its in-memory cluster computing, which increases the processing speed of an application. The snapshot shown here illustrates how Spark processing addresses the limitations of Hadoop.

Apache Spark is an engine for running Spark applications quickly. It provides high-level APIs in Java, Scala, Python, and R for application development.

Spark can use Hadoop in two ways: one is for storage and the other is for processing. Because Spark has its own cluster management, it uses Hadoop for storage only.

Spark is designed to cover a wide range of workloads, such as batch applications, iterative algorithms, interactive queries, and streaming. Apart from supporting all of these workloads in a single system, it reduces the management burden of maintaining separate tools.

Spark began in 2009 as a project in UC Berkeley's AMPLab, created by Matei Zaharia. It was open sourced in 2010 under a BSD license. It was donated to the Apache Software Foundation in 2013, and Apache Spark has been a top-level Apache project since February 2014.
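The in-memory idea mentioned above can be illustrated with a small sketch. This is plain Python, not Spark's API: the `LINES` data, the `load_lines` helper, and the `load_count` counter are all hypothetical stand-ins, used only to show why keeping intermediate data in memory helps when several computations reuse the same dataset.

```python
from collections import Counter

# Hypothetical input standing in for a dataset that would live in storage.
LINES = [
    "spark extends the mapreduce model",
    "spark keeps working data in memory",
    "mapreduce writes intermediate data to disk",
]

load_count = 0  # how many times the simulated storage layer was read


def load_lines():
    """Simulate an expensive read from storage."""
    global load_count
    load_count += 1
    return list(LINES)


def map_phase(lines):
    """Map step: emit a (word, 1) pair for every word."""
    return [(word, 1) for line in lines for word in line.split()]


def reduce_phase(pairs):
    """Reduce step: sum the counts per word."""
    totals = Counter()
    for word, n in pairs:
        totals[word] += n
    return totals


# Load once and keep the mapped pairs in memory.
cached_pairs = map_phase(load_lines())

# Two different computations reuse the in-memory data
# without going back to storage.
word_counts = reduce_phase(cached_pairs)
total_words = sum(n for _, n in cached_pairs)
```

In this toy version, storage is read only once even though two results are computed. A disk-based pipeline that writes and re-reads intermediate data between steps would pay that cost on each pass, which is the limitation the article attributes to Hadoop MapReduce.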

Rumors suggest that Spark is nothing but a modified version of Hadoop. In fact, Spark is not a modified version of Hadoop and does not depend on it. Hadoop is one of the ways to deploy Spark, so the two are complements rather than competitors.


Spark was introduced by the Apache Software Foundation to speed up Hadoop's computational processing and overcome its limitations.